Two weeks ago I wrote about Project Glasswing and the framing Anthropic chose for Claude Mythos Preview: a model so dangerous it could not be made generally available. The system card promised cyber capabilities a step beyond anything else on the market. Logan Graham, head of Anthropic’s frontier red team, estimated six to eighteen months until other labs shipped something comparable.

The clock ran out in fifteen days.

On April 23, OpenAI shipped GPT-5.5. The headline that did most of the work came from XBOW, an independent pentesting outfit that had early-access partner status: “Mythos-Like Hacking, Open to All.” Partner status is worth flagging for bias, but the same scaffold has been used to benchmark Anthropic and Google models in prior posts, so the cross-model numbers are at least internally consistent.

What GPT-5.5 Actually Does on Cyber

The frozen-CVE benchmark methodology is straightforward: take an open-source application at a known-vulnerable version, point an agent at it, and measure how often it misses real bugs.

- GPT-5: 40% missed

- Opus 4.6: 18% missed

- GPT-5.5: 10% missed
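For a sense of what those percentages measure, here is a minimal sketch of a miss-rate calculation. The function name and the placeholder bug IDs are hypothetical; this is not XBOW's harness, only the arithmetic behind a "percent missed" figure, plus the relative drop implied by the numbers above.

```python
def miss_rate(known_bugs: set[str], found_bugs: set[str]) -> float:
    """Fraction of known bugs that the agent failed to report."""
    if not known_bugs:
        raise ValueError("need at least one known bug")
    missed = known_bugs - found_bugs
    return len(missed) / len(known_bugs)


# Hypothetical run: 10 known bugs in the frozen version, agent reports 9.
known = {f"BUG-{i}" for i in range(10)}
found = {f"BUG-{i}" for i in range(9)}
example_rate = miss_rate(known, found)
print(f"{example_rate:.0%} missed")

# Miss rates as quoted in this post.
reported = {"GPT-5": 0.40, "Opus 4.6": 0.18, "GPT-5.5": 0.10}

# Relative reduction in misses going from GPT-5 to GPT-5.5.
reduction = 1 - reported["GPT-5.5"] / reported["GPT-5"]
print(f"{reduction:.0%} fewer misses than GPT-5")
```

On the quoted figures, going from 40% to 10% missed means roughly three out of every four bugs GPT-5 overlooked are now caught.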

The black-box vs white-box split is more interesting than the headline number. Black-box (no source code) used to be the harder mode by a wide margin. GPT-5.5 with no source access now outperforms GPT-5 with full source. Add source back in and the white-box benchmark compresses so badly the operators declared it dead.

OpenAI’s own system card backs this up at the official-eval level: 81.8% on CyberGym (up from 79.0% for GPT-5.4) and 88.1% on internal CTF challenges. The UK AI Security Institute red team called it “the strongest performing model overall on their narrow cyber tasks.” On Terminal-Bench 2.0, GPT-5.5 scores 82.7% against Mythos Preview’s 82.0%. Statistical tie, but the direction matters: a publicly available consumer subscription model is now trading blows with the model Anthropic gated to Apple, Microsoft, JPMorgan, and the Pentagon.
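A rough way to sanity-check the "statistical tie" call is a two-proportion z-score. The sketch below assumes a task count of 100 per model, which is a placeholder and not the real size of Terminal-Bench 2.0, and it treats the two runs as independent samples even though both models are scored on the same tasks. Read it as an illustration of the scale of the noise, not a proper significance test.

```python
import math


def diff_z_score(p1: float, p2: float, n1: int, n2: int) -> float:
    """z-score for the difference between two independent pass rates."""
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    return (p1 - p2) / se


# ASSUMPTION: hypothetical task count, chosen only for illustration.
ASSUMED_TASKS = 100

# Scores as quoted in this post: GPT-5.5 82.7%, Mythos Preview 82.0%.
z = diff_z_score(0.827, 0.820, ASSUMED_TASKS, ASSUMED_TASKS)
print(f"z = {z:.2f}")
```

Under those assumptions the z-score lands far below the conventional 1.96 threshold, which is consistent with calling a 0.7-point gap a tie.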

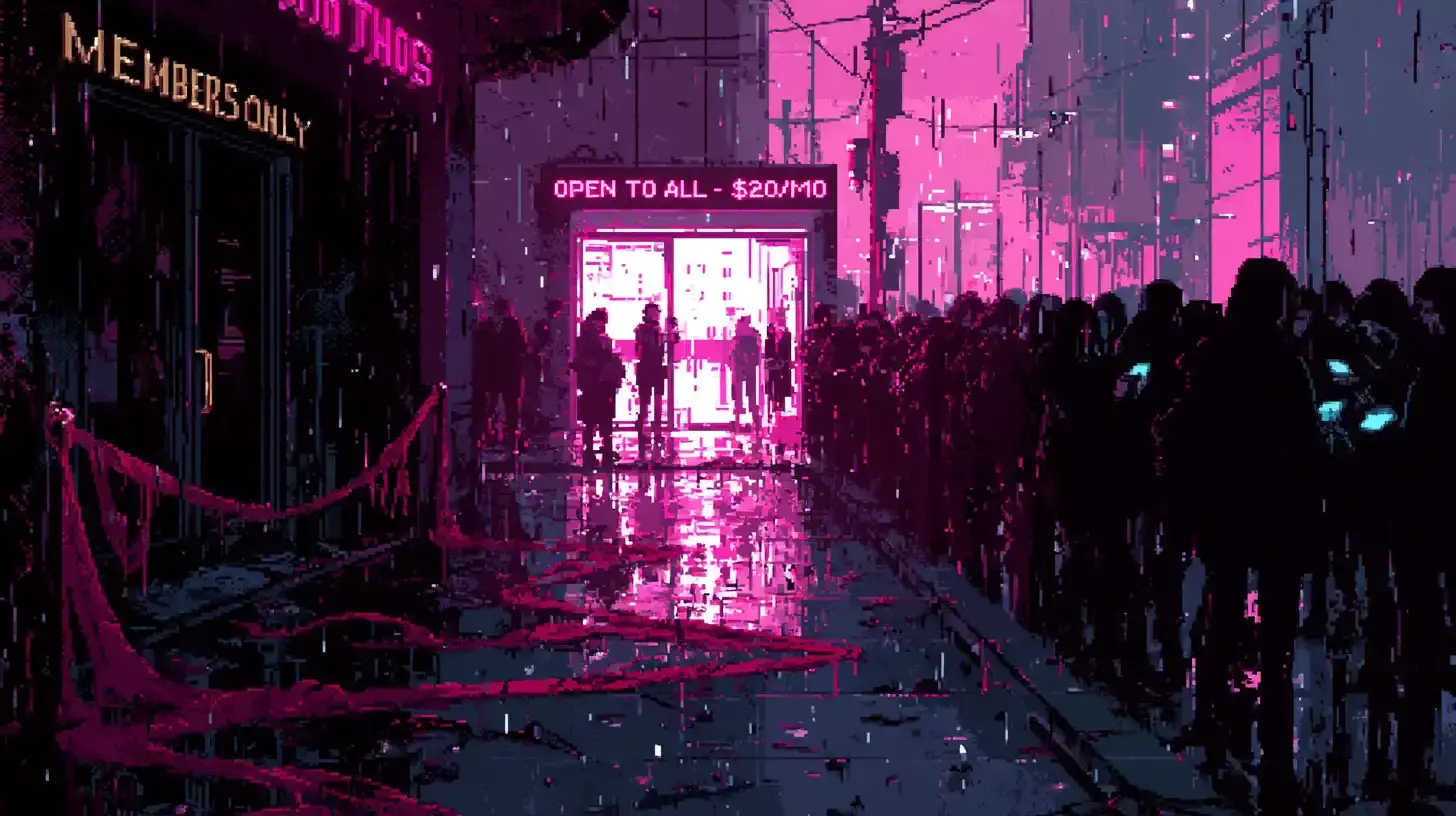

On April 11, AISLE showed tiny open models could reproduce Mythos’s flagship vulnerability findings for $0.11 per million tokens. The defense democratized first. On April 23, OpenAI shipped Mythos-class offensive capability to ChatGPT Plus subscribers at $20 a month. The velvet rope lasted fifteen days on the offense side. It already had holes in it on the defense side.

What Mythos Still Leads On

The picture isn’t symmetric.

- Humanity’s Last Exam (no tools): Mythos 56.8% vs GPT-5.5 Pro 43.1%

- SWE-bench Pro: Mythos cleanly ahead

- Creative exploit chaining: AISLE’s prior replication study could match Mythos on bug detection but not on engineering a novel four-vulnerability browser attack autonomously

The frontier reasoning gap is real. The offensive engineering gap may be real. The cyber-detection gap that Glasswing was sold on, the part justifying $25/$125 per million tokens and a Fortune 100 partner list, is gone.

The “Too Dangerous to Release” Pattern

The Hacker News thread on the XBOW post had the obvious comparison ready: “Just like OpenAI said GPT 2 was too dangerous to release?” Same framing, different lab, seven years apart. GPT-2’s gating lasted nine months. Mythos’s gating, on the offense side, lasted fifteen days before a competitor made it irrelevant.

OpenAI isn’t playing innocent. GPT-5.5 ships under a “cyber-permissive” license for verified defenders with stricter classifiers for general users. Same shape as Mythos: dual-use language, government partnerships, gated cyber tier. The difference is the consumer model goes out the door at $20 a month while the cyber tier handles the press release. Anthropic kept the whole capability behind the velvet rope. OpenAI sold tickets.

“Anthropic has Mythos, but only a select few have seen it. Now, OpenAI has a model that, by all accounts, seems rather comparable. But they’re releasing it freely.” (XBOW, April 23, 2026)

The Pricing Reset

Mythos post-preview is $25/$125 per million tokens. GPT-5.5 is $5/$30, with GPT-5.5 Pro at $30/$180. Per OpenAI’s own system card, Pro is “the same underlying model” as standard GPT-5.5, just running with parallel test-time compute. The cyber capability lives in the weights, not the tier. The $20 Plus subscriber and the $100 Pro subscriber get the same model on the cyber benchmarks. Pro buys extra inference compute for the rare reasoning rows where Mythos still leads.
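To put the per-token gap in dollar terms, here is a back-of-the-envelope cost comparison. The prices are the ones quoted above; the workload (2 million input tokens, 500,000 output tokens) is a made-up example, so the absolute figures matter less than the ratios.

```python
# (input, output) prices in USD per million tokens, as quoted in this post.
PRICES = {
    "Mythos": (25.0, 125.0),
    "GPT-5.5": (5.0, 30.0),
    "GPT-5.5 Pro": (30.0, 180.0),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """API cost in USD for one job at the listed per-million-token prices."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# ASSUMPTION: hypothetical workload, chosen only for illustration.
INPUT_TOKENS = 2_000_000
OUTPUT_TOKENS = 500_000

costs = {m: job_cost(m, INPUT_TOKENS, OUTPUT_TOKENS) for m in PRICES}
for model, cost in costs.items():
    print(f"{model}: ${cost:.2f}")

ratio = costs["Mythos"] / costs["GPT-5.5"]
print(f"Mythos costs {ratio:.1f}x standard GPT-5.5 on this workload")
```

For this example mix, the same job comes to $112.50 on Mythos, $25.00 on GPT-5.5, and $150.00 on GPT-5.5 Pro, so Mythos runs 4.5 times the standard GPT-5.5 bill. A different input/output split would shift the ratio somewhat, since the output price gap is narrower than the input price gap.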

The “too dangerous” tier sets the ceiling. The “available Tuesday” tier sets the floor. The math no longer flatters Anthropic’s premium.

Anthropic announced Mythos when it had a clear lead. Two weeks later, the lead was gone. The “responsible stewardship” framing survives exactly as long as the gap does.

The Reasonable Take

I’m not arguing GPT-5.5 shouldn’t have shipped. I’m arguing the “too dangerous to release” framing for Mythos cannot survive a competitor shipping comparable capability to ChatGPT Plus in the same month. Either the capability was always close to matchable and the framing was strategic, or OpenAI shipped something it shouldn’t have. No third option leaves both labs consistent with their public positioning.

The Glasswing 90-day report I’m waiting on now has a much higher bar. If the findings are genuinely Mythos-only, the premium and the framing are retroactively justified. If they’re reproducible on GPT-5.5 within a week of the report dropping, the whole posture was a marketing run between two release windows.

Six to eighteen months was fifteen days. The arc from “unprecedented cyber risk” to “open to all” took less time than the average news cycle.